Evals Are NOT Audits

Two questions keep coming up in my recent conversations, with customers and peers alike.

- Aren't evals and audits the same thing? They seem aimed at the same goal.

- In a world where models have become exceedingly good, do we even need an eval layer?

The answer to both starts with a story about insurance underwriters and noise.

The 55% Problem

In 2015, Daniel Kahneman studied 48 insurance underwriters at a major company. He gave each of them the same five customer profiles: identical age, health, and risk factors. The task: quote a premium. The executives expected maybe 10% variation. "We all follow the same underwriting rules."

The actual variation: 55%.

One underwriter quoted $9,500. Another quoted $16,700. Same customer. Same risk. Same guidelines. Most orgs don't know their noise level because they've never measured it.
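
To make the 55% figure concrete, here is a minimal sketch of one way to compute it. It assumes the variation is measured as the gap between two quotes divided by their average, which is consistent with the two quotes above; the function names are illustrative and not taken from the study.

```python
from itertools import combinations
from statistics import median


def relative_difference(a: float, b: float) -> float:
    """Absolute gap between two quotes, as a fraction of their average."""
    return abs(a - b) / ((a + b) / 2)


def noise_index(quotes: list[float]) -> float:
    """Median relative difference over every pair of quotes for the same case."""
    return median(relative_difference(a, b) for a, b in combinations(quotes, 2))


# The two quotes from the story: a $7,200 gap on a $13,100 average.
pair_gap = relative_difference(9_500, 16_700)
print(f"{pair_gap:.0%}")  # 55%
```

Running `noise_index` over every reviewer's score for the same case gives a single number you can track, which is the measurement most organizations have never taken.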

Meet Low Cost Sampling

Over decades, quality assurance has settled comfortably on sampling. Call centers review 3-5% of interactions. Healthcare providers audit random charts. Financial services spot-check transactions. Everyone accepts this trade-off: "expert judgment" versus scale.

The Promise of LLM-as-Judge

When LLMs emerged, they promised a breakthrough: evaluate 100% of cases at $0.01 per review instead of $50 for human review. And for quality monitoring, it works. Eval platforms solve this well.
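
The arithmetic behind that promise can be sketched in a few lines. The per-review prices and the 5% sampling rate come from the text above; the monthly volume of 10,000 cases is a made-up number for illustration only.

```python
def review_cost(total_cases: int, coverage: float, cost_per_review: float) -> float:
    """Total spend when a given fraction of cases is reviewed at a given unit price."""
    return total_cases * coverage * cost_per_review


monthly_cases = 10_000  # hypothetical volume, not from the article

human_sampled = review_cost(monthly_cases, coverage=0.05, cost_per_review=50.00)
llm_full = review_cost(monthly_cases, coverage=1.00, cost_per_review=0.01)

print(f"Human review, 5% sample: ${human_sampled:,.0f}")
print(f"LLM-as-Judge, 100% coverage: ${llm_full:,.0f}")
```

Under these assumptions, full LLM coverage costs a small fraction of what a 5% human sample costs, which is why the approach took hold for quality monitoring.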

Why LLM-as-Judge Can't Be an Audit

The compliance officer asks: "Show me how this interaction complied with our protocols. Which specific criteria were met?"

The team pulls up the eval score: 85/100. Reasoning: "appropriate care with good documentation." They celebrate. Ready to deploy.

The Variability Problem

Fig 2. Input: same patient interaction, same protocol, same evaluation prompt. Eval score: 85/100. Reasoning: "Appropriate care with good documentation. Agent followed triage protocol correctly." The AI team celebrates: 100% coverage, quality looks solid, ready to deploy.
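
One way to check whether a single score like this is stable is to score the same input repeatedly and look at the spread. The sketch below does that against a simulated judge: `make_simulated_judge` is a hypothetical stand-in for a real LLM call, and its jitter of plus or minus 10 points is an arbitrary assumption, not a measured result.

```python
import random
from statistics import mean, pstdev
from typing import Callable


def score_spread(judge: Callable[[str], float], case: str, runs: int = 20) -> dict[str, float]:
    """Score the same case repeatedly and summarize how much the scores move."""
    scores = [judge(case) for _ in range(runs)]
    return {
        "min": min(scores),
        "max": max(scores),
        "mean": mean(scores),
        "stdev": pstdev(scores),
    }


def make_simulated_judge(seed: int) -> Callable[[str], float]:
    """Stand-in for an LLM judge: a base score of 85 plus random jitter (simulated)."""
    rng = random.Random(seed)
    return lambda case: 85 + rng.randint(-10, 10)


spread = score_spread(make_simulated_judge(seed=0), "same patient interaction")
print(spread)
```

Swapping the simulated judge for a real one turns this into a small noise measurement for your own eval prompt. A spread of zero is what an audit needs; anything above zero is noise.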

Evals ≠ Audits — Different Problems Entirely

Evals answer: "How good is this?" (Quality measurement). Audits answer: "Does this provably follow regulation X?" (Compliance proof).

Evals vs Audits

| Dimension | Evals | Audits |
|---|---|---|
| Question answered | "How good is this?" | "Does this provably comply?" |
| Purpose | Quality measurement; noise acceptable | Compliance proof; must be deterministic |
| Analogy | Speedometer: track trends, some variance fine | Safety inspection: binary pass/fail, no variance allowed |
| Mode of thinking | System 1 (fast, intuitive) | System 2 (deliberate) |
| Approach | LLM-as-Judge works perfectly here | Needs deterministic rule-based reasoning |

Why LLM-as-Judge Works for One but Not the Other

This isn't a criticism of LLM-as-judge. For quality monitoring (evals), LLM-based approaches are a strong fit: noise is acceptable when you are tracking trends.

For compliance proof (audits), you need deterministic, rule-based reasoning that produces identical results every time. Kahneman's System 1 vs System 2 distinction maps onto this split:

- System 1: Fast, intuitive, noisy (where LLMs operate)

- System 2: Slow, deliberate, deterministic (what audits require)

A probabilistic system can't provide deterministic proof. It's not broken — it's designed for a different use case.
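
As a rough illustration of what "deterministic, rule-based" could look like, the sketch below runs a record through explicit checks and reports which criteria passed. The rule IDs, descriptions, and record fields are all hypothetical; real criteria would come from the regulation or protocol being audited.

```python
from dataclasses import dataclass
from typing import Callable, Sequence


@dataclass(frozen=True)
class Rule:
    rule_id: str
    description: str
    check: Callable[[dict], bool]


@dataclass(frozen=True)
class Finding:
    rule_id: str
    description: str
    passed: bool


# Hypothetical protocol criteria, for illustration only.
RULES: tuple[Rule, ...] = (
    Rule("TRIAGE-01", "Triage level was recorded",
         lambda r: r.get("triage_level") is not None),
    Rule("DOC-01", "Interaction has a documentation note",
         lambda r: bool(r.get("note"))),
    Rule("ESC-01", "High-risk cases were escalated",
         lambda r: r.get("triage_level") != "high" or r.get("escalated") is True),
)


def audit(record: dict, rules: Sequence[Rule] = RULES) -> list[Finding]:
    """Apply every rule to the record; the same record always yields the same findings."""
    return [Finding(rule.rule_id, rule.description, rule.check(record)) for rule in rules]


def is_compliant(findings: Sequence[Finding]) -> bool:
    """Binary pass/fail: compliant only if every criterion passed."""
    return all(f.passed for f in findings)


record = {"triage_level": "high", "note": "Chest pain, advised ER visit", "escalated": False}
findings = audit(record)
for f in findings:
    print(f"{f.rule_id}: {'PASS' if f.passed else 'FAIL'}  {f.description}")
print("Compliant:", is_compliant(findings))
```

Unlike a single 85/100 score, each finding names the specific criterion it checked, and rerunning the audit on the same record cannot change the answer.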

The Path to Production

Most teams building AI agents go through three stages, and most are stuck between Stage 2 and Stage 3.

Stage 1: Observability

"What happened?" Logs, metrics, monitoring. You can see what the agent did.

Stage 2: Evals

"How good was it?" Quality assessment, testing. LLM-as-Judge provides 100% coverage.

Stage 3: Audits

"Can you prove compliance?" Regulatory verification. Deterministic proof, not probabilistic scores.

Do You Even Need an Audit Layer?

The latest breed of models has become exceedingly good at code generation, and therein lies the answer: the complexity and subjectivity of the task decide the need.

Explore the full framework: Evals vs Audit — Quality Measurement vs Compliance Verification →

Evals are NOT audits. But check whether you even need audits.

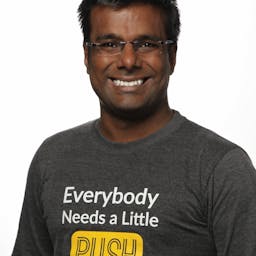

Vivek Khandelwal

2X founder who has built multiple companies over the last 15 years. He bootstrapped iZooto to multiple millions in revenue. He graduated from IIT Bombay and has deep experience across product marketing and GTM strategy. He mentors early-stage startups at Upekkha and SaaSBoomi's SGx program. At CogniSwitch, he leads all things marketing, business development, and partnerships.